A quick, easy and fun collaboration with school friend, composer, keyboardist and drummer Stefan Melzak led to a release of Sixties Jazz Grooves with the great French label MYMA.

Author: miltonline

Blue & Yellow

Colours of the Ukrainian flag making their own music.

The Sound Asleep project was presented with Professor Debra Skene at the World Sleep Congress in Rome, Italy, on March 11, 2022.

World Sleep 2022 is a global scientific congress bringing the best of sleep medicine and research to Rome, Italy, March 11–16, 2022. The World Sleep congress, now in its 16th iteration, consistently gathers the best minds in sleep medicine and research for multiple days of scientific sessions and networking.

Sound Asleep has now been presented at the British Sleep Society, the European Sleep Research Conference and the World Sleep Congress, engaging with hundreds of members of the international sleep community.

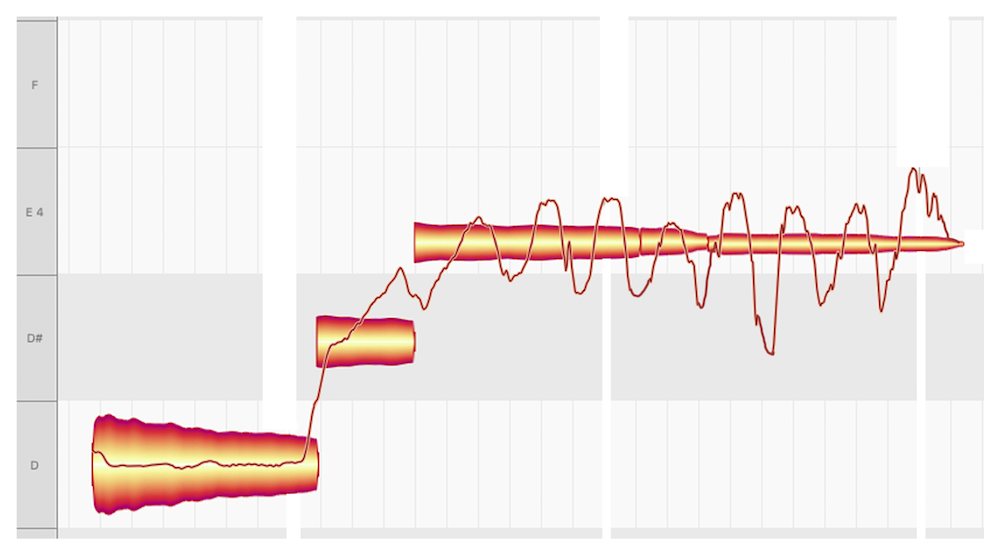

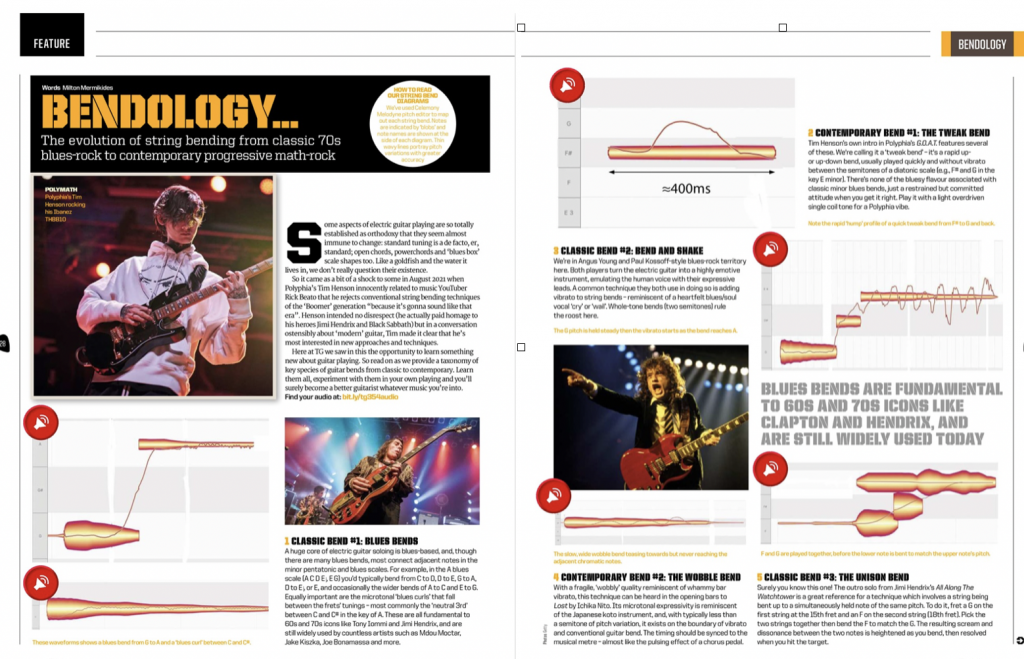

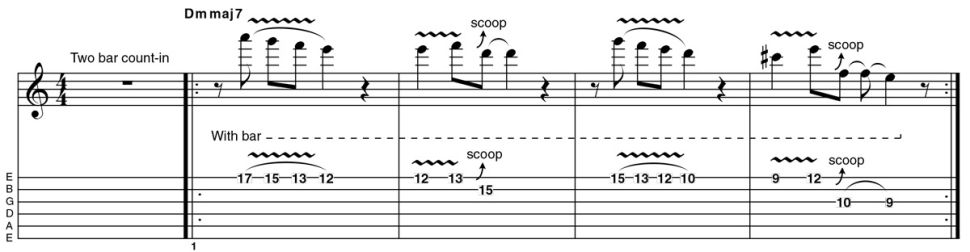

Bendology in Total Guitar

It was fun putting together this article with Chris Bird for Total Guitar (Feb 2022), and using the whole BoomerBendGate as an opportunity for some bendological research. In so doing I discovered some ridiculously killer zoomer players in Tim Henson, Mateus Asato, Wes Hauch, Ichika Nito, Plini and all.

It is now also published online in Guitar World.

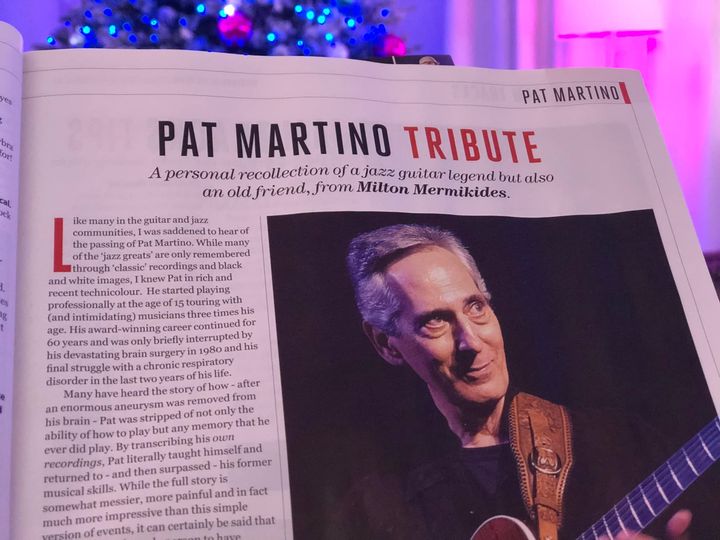

Pat Martino GT Tribute

Super happy to be made an Ableton Certified Trainer, and to join a wonderful international community of artist-educator-technologists from 56 countries. Just 8 of us from the UK have been selected since 2013, so it’s a real privilege. I am of course a technophile (=nerd), but I have a particular love for Ableton Live (and Push) which – now with Max for Live – is incredibly open, flexible, and linked to diverse forms of historical and contemporary music making in composition, performance, production, and programming. I genuinely love Live and Ableton and relate deeply to their musical ethos.

John Mayer in GT

My article on the remarkable John Mayer’s Blues techniques is the cover feature of Guitar Techniques GT328. Read it and (make your guitar) weep.

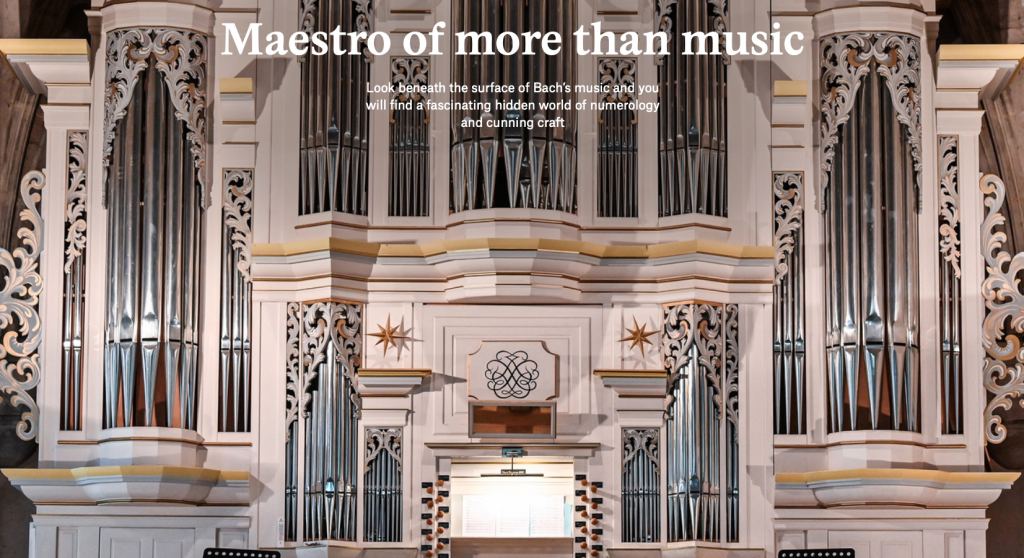

J.S.Bach in Aeon

My essay on J.S.Bach’s crafty brilliance and its implications for us all is now published in the wonderful Aeon digital magazine. Many thanks to Nigel Warburton (author, philosopher and podcaster) for the commissioning, editing and constant support.

https://aeon.co/essays/look-into-the-secret-world-of-numerology-and-puzzles-in-bach

The wonderful New Zealand classical guitarist and professor Matthew Marshall is performing Insighted on his 12-date tour of NZ and Australia (July-August 2021) as part of a super eclectic programme. It sounds great in rehearsals, what fun. Score now available.

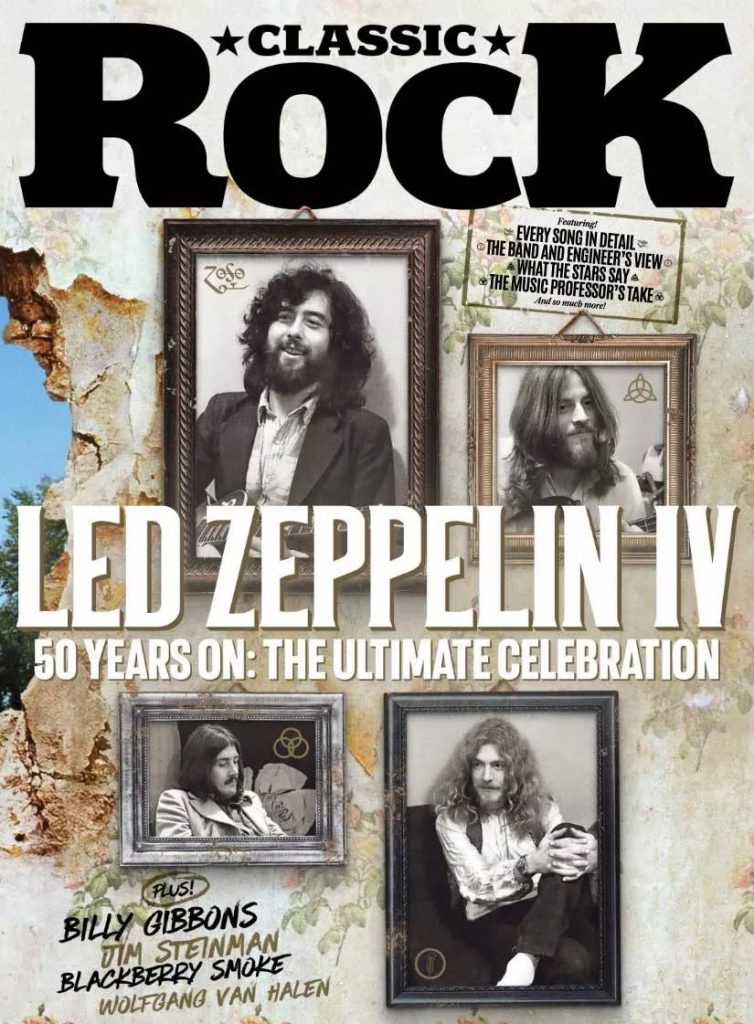

It was fun writing this track-by-track musicological analysis of Led Zep IV (50 this year, ahem) for the cover feature of Classic Rock Magazine. With copyright and terminology as constraints, it’s challenging and instructive to communicate deep theory without dumbing down. Also, I now know how to hear the beginning of Rock n Roll and the bridge of Stairway. Get it here and in all reasonable shops.

Hidden Music featured in the wonderful New Scientist Podcast

Lessons from Eurovision

Total Guitar (Issue 345) has published an article on what Eurovision teaches us about music listening and (guitar) performance. Some sample images below on rhythm, perception, tempo and modulation respectively.

Eurometal in Guitar World

My Total Guitar article is here re-adapted and syndicated in Guitar World. The intersection of Metal and Eurovision – Eurometal.

My article providing practical insights into the music and playing of the late, great Allan Holdsworth is now freely available to the public on MusicRadar (including notation & audio). A teaser below.

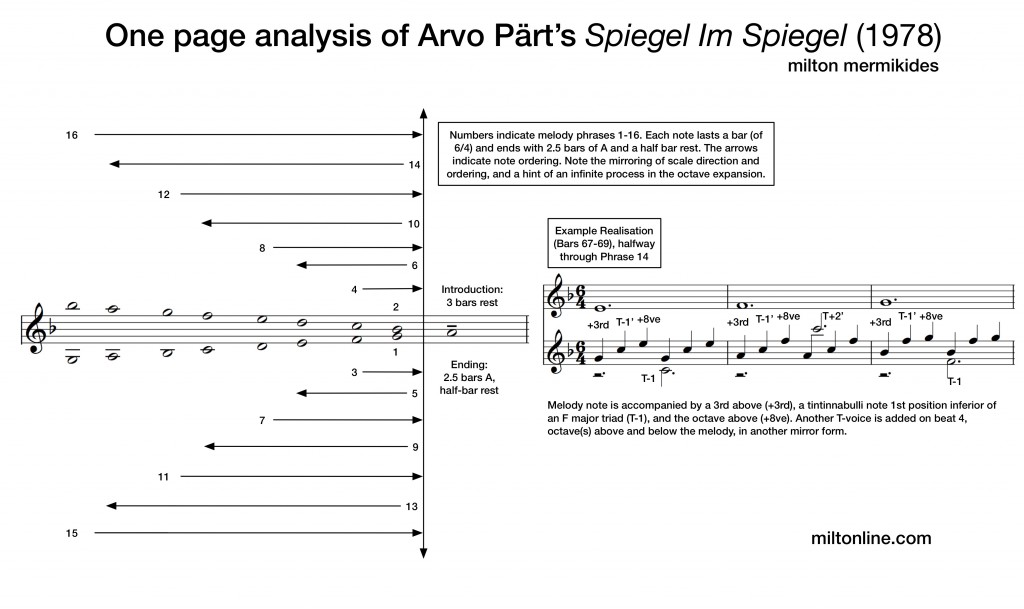

Musical illustrations of Arvo Pärt’s Spiegel im Spiegel and Beatitudes are now published in Arvo Pärt’s Resonant Texts: Choral and Organ Music 1956–2015 (Shenton 2018) and in Twentieth-Century Music and Mathematics (Illiano & Locanto, eds., Brepols 2019).

These unpack the musical mechanisms of Arvo Pärt’s music, as outlined in the lecture below.

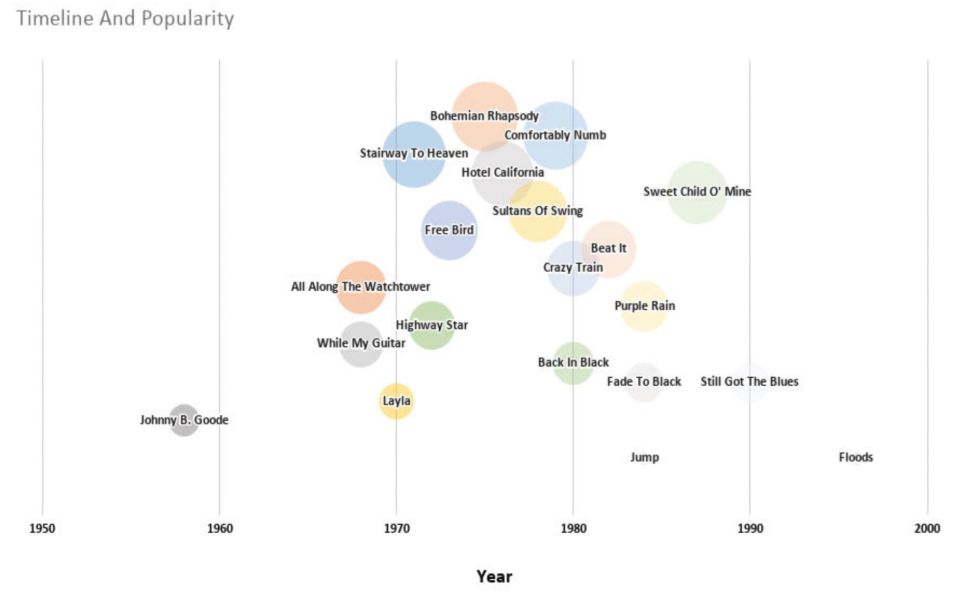

Anatomy of a Guitar Solo

Originally commissioned for Total Guitar, this examination of the reader-voted top 50 guitar solos is now publicly available on Guitar World.

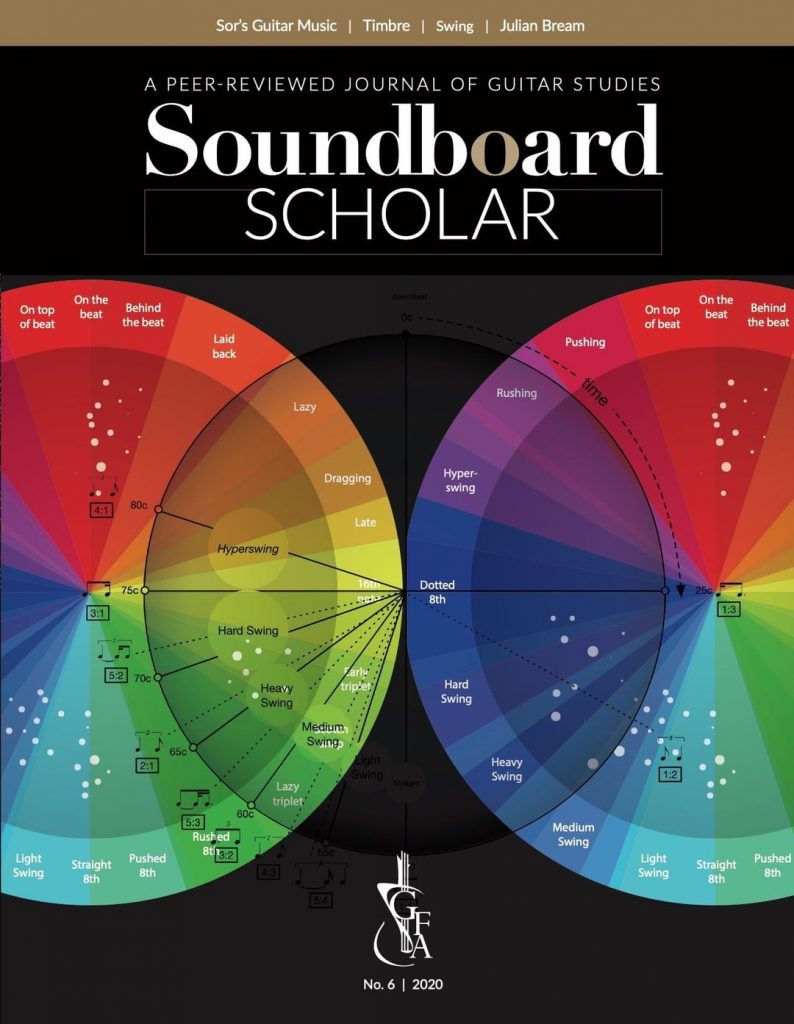

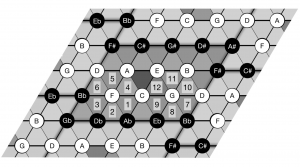

Engaging directly with time-feel and micro-timing on the electric guitar with specific exercises and style studies. This is for players of many levels who want to draw focus to the elements of groove that are often excluded, glossed over or mythologized in the learning process.

A live video presentation at the fantastic 21st Century Guitar Conference “in” Lisbon, March 2021, hosted by the wonderful Amy Brandon and Rita Torres. ‘Digital Self-Sabotage’ explores our deep and twisted engagement with the fretboard as guitarists, and how technology can expand and disrupt this bond for learning and insight.

Soundboard Scholar (No.6) features my paper “Monitored Freedom: Swing Rhythm in the Jazz Arrangements of Roland Dyens”, which examines the time-feel in the performances and scores of Roland Dyens, with particular reference to his arrangement of Nuages and Django’s performances of this piece. Working with the genius Jonathan Leathwood is always a privilege and a joy, and I am very grateful that my illustration is used as the cover image of the journal. Available here.